On January 28, 1986, the space shuttle Challenger broke apart 73 seconds after launch. Seven crew members died. The cause was a rubber O-ring, a seal designed to prevent hot gases from escaping the solid rocket boosters. The booster joints had never failed outright on previous launches, though engineers had documented O-ring erosion and hot-gas blow-by on earlier flights. On an unusually cold Florida morning, the rubber lost flexibility, failed to seal, and triggered a catastrophic chain reaction.

The Challenger disaster became a case study in systems thinking. The O-ring was one of thousands of components, costing pennies compared to engines costing millions. But in a system where every component must work for the system to work, the weakest link determines the outcome. The shuttle wasn't 99% successful because 99% of its parts functioned. It was 0% successful because one part failed.

Seven years later, economist Michael Kremer formalized this insight in "The O-Ring Theory of Economic Development." His core observation: many production processes are multiplicative rather than additive. Output equals the product of component qualities, not their sum. When tasks are quality complements, improving nine of ten components while neglecting the tenth may accomplish nothing. The bottleneck constrains everything.

I've been thinking about O-rings constantly over the past month, as a remarkable convergence of research has reshaped our understanding of how AI affects work. A new National Bureau of Economic Research (NBER) working paper from Joshua Gans and Avi Goldfarb—titled, appropriately, "O-Ring Automation"—argues that standard AI workforce projections are built on a foundational error. They assume tasks add together when they actually multiply. And this isn't a minor methodological quibble. It means that virtually every AI productivity forecast you've seen—from McKinsey, from Goldman Sachs, from the consulting firms advising your board—is systematically wrong.

The Foundational Error

The standard approach to measuring AI's workforce impact follows a simple logic: identify what tasks comprise a job, determine which tasks AI can perform, aggregate the results. This methodology underlies the most cited research in the field—Frey and Osborne's automation probabilities, Webb's patent-text analysis, Felten's AI exposure indices, and Eloundou's GPT-4 assessments.

All of these studies use some version of weighted linear aggregation:

Exposure = Σ (task weight × task automation probability)

If an occupation consists of ten equally weighted tasks and nine are fully automatable, this formula yields "90% exposed." The implicit assumption is that automating 90% of tasks eliminates roughly 90% of the job's value, or at least 90% of the human contribution.
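
Here is a minimal Python sketch of that linear aggregation, using the ten-task example. The function name and the equal weights are illustrative assumptions; each of the published studies defines its own weights and automation scores.

```python
def linear_exposure(weights, probs):
    """Weighted linear aggregation: sum of (normalized weight x automation probability)."""
    total = sum(weights)
    return sum(w / total * p for w, p in zip(weights, probs))

# Hypothetical occupation: ten equally weighted tasks, nine fully automatable.
weights = [1.0] * 10
probs = [1.0] * 9 + [0.0]

print(f"exposure: {linear_exposure(weights, probs):.0%}")
```

Note that nothing in this formula asks which task is left over; the tenth task counts for exactly one-tenth no matter what it is.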

Gans and Goldfarb's critique is devastating in its simplicity: this math is wrong when tasks are quality complements. Under O-ring production, output is multiplicative:

Y = q₁ × q₂ × q₃ × ... × qₙ

If the tenth task is a binding bottleneck—a task whose quality constrains overall output regardless of performance on the other nine—then automating the other tasks doesn't eliminate 90% of the job. It may not eliminate any of the job. The worker simply reallocates their time to the bottleneck task, potentially performing it at higher quality than before.
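
A short Python sketch makes the contrast with the additive view visible. It assumes task qualities on a 0 to 1 scale, nine tasks handed to AI at quality 1.0, and one human-performed bottleneck whose quality we vary. These numbers are hypothetical, chosen only to show the shape of the two formulas.

```python
import math

def additive_output(qualities):
    """Additive view: output is the average task quality."""
    return sum(qualities) / len(qualities)

def oring_output(qualities):
    """O-ring view: output is the product of task qualities, each in [0, 1]."""
    return math.prod(qualities)

# Hypothetical role: nine tasks automated at quality 1.0 plus one
# human bottleneck task whose quality q10 we vary.
for q10 in (0.0, 0.5, 0.9):
    tasks = [1.0] * 9 + [q10]
    print(f"q10={q10}: additive={additive_output(tasks):.2f}, o-ring={oring_output(tasks):.2f}")
```

As the bottleneck quality moves from 0.0 to 0.9, the additive view barely changes (0.90 to 0.99), while O-ring output tracks the bottleneck one-for-one (0.00 to 0.90). Automating the other nine tasks does nothing to relieve that dependence.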

This is what Gans and Goldfarb call the "focus mechanism." When some tasks are automated, workers don't lose those tasks and keep everything else constant. They concentrate their fixed time endowment on the remaining tasks. A worker who previously allocated one hour each to ten tasks now allocates two hours each to five tasks. Quality on the remaining tasks increases. And if those remaining tasks are bottlenecks—if they're the binding constraints on output—then total value may actually rise.
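
The focus mechanism can be sketched the same way. This is a toy model, not Gans and Goldfarb's specification: it assumes a hypothetical concave quality curve q(t) = 1 - exp(-t), a ten-hour time endowment, AI quality of 0.9 on automated tasks, and it treats every remaining human task as a bottleneck.

```python
import math

HOURS = 10.0  # the worker's fixed time endowment (hypothetical)

def task_quality(hours):
    """Hypothetical concave quality curve: more time helps, with diminishing returns."""
    return 1.0 - math.exp(-hours)

def role_output(n_tasks, n_automated, ai_quality, human_hours_per_task):
    """O-ring output: automated tasks at ai_quality, the rest done by the human."""
    n_human = n_tasks - n_automated
    human_q = task_quality(human_hours_per_task)
    return ai_quality ** n_automated * human_q ** n_human

# Ten tasks at one hour each, before any automation.
before = role_output(10, 0, ai_quality=0.9, human_hours_per_task=HOURS / 10)
# Five tasks automated, but the worker still spends one hour per remaining task.
no_focus = role_output(10, 5, ai_quality=0.9, human_hours_per_task=HOURS / 10)
# Five tasks automated, and the freed hours go to the remaining five (two hours each).
focus = role_output(10, 5, ai_quality=0.9, human_hours_per_task=HOURS / 5)

print(f"before={before:.3f}  automated, no focus={no_focus:.3f}  automated + focus={focus:.3f}")
```

Under these assumptions, automating half the tasks raises output even if the freed time sits idle, but reallocating those hours to the remaining tasks raises it considerably more. That second step is the focus mechanism.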

Four Research Efforts Converge

The O-ring framework might have remained an elegant theoretical critique if not for three empirical studies published in the past four months. Together, they provide the data to test whether the bottleneck problem is real—and the answer is unambiguous.

OpenAI's GDPval (October 2025) asked the capability question: Can AI perform economically valuable tasks at expert quality? They constructed 1,320 tasks across 44 occupations drawn from the sectors that contribute most to U.S. GDP, then had professionals with an average of 14 years of experience compare AI outputs against human expert outputs in blind evaluations. The headline finding: frontier models like Claude Opus 4.1 won or tied against human experts 47.6% of the time. AI can now match or exceed human performance on roughly half of tested tasks, while operating roughly 100x faster and cheaper by OpenAI's estimate.
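
For readers unfamiliar with pairwise evaluation, here is a minimal sketch of how a win-or-tie rate is tallied. It assumes each blind comparison produces one verdict: "ai", "human", or "tie". The labels and the sample verdicts are hypothetical, and GDPval's actual grading protocol is more involved.

```python
from collections import Counter

def win_or_tie_rate(verdicts):
    """Share of blind comparisons where graders rated the AI output
    better than ("ai") or as good as ("tie") the expert's output."""
    counts = Counter(verdicts)
    return (counts["ai"] + counts["tie"]) / len(verdicts)

# Hypothetical grader verdicts, one per task comparison.
verdicts = ["ai", "human", "tie", "human", "ai", "human", "human", "tie"]
print(f"win-or-tie rate: {win_or_tie_rate(verdicts):.1%}")
```

Counting ties alongside wins is why a figure just under 50% reads as "matching or exceeding" experts on about half the tasks.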

This is impressive capability research. But notice what it doesn't tell you: whether those tasks are bottlenecks or complements within actual production processes. GDPval measures what AI can do in isolation. It doesn't measure how AI capability translates to workforce outcomes.

Anthropic's Economic Index (January 2026) asked the usage question: How is AI actually being used? They analyzed over a million Claude.ai conversations and a million API transcripts, classifying tasks by complexity, skill requirements, and success rates. The findings were striking: usage is highly concentrated (top 10 task categories account for 24% of all conversations), success rates decline with task complexity (70% for simple tasks, 66% for complex), and AI disproportionately handles higher-education tasks within occupational profiles.

But here's the critical finding: Anthropic tested what happens when you model tasks as complements rather than substitutes. Under standard separable-task assumptions, their data implies 1.8 percentage points of annual productivity growth from AI. When they apply a constant elasticity of substitution framework with σ = 0.5—meaning tasks are complements—that projection drops to 0.7–0.9 percentage points. Add success rate adjustments and you're at 0.6 percentage points.
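
To see why complementarity shrinks the forecast, here is a toy constant elasticity of substitution (CES) aggregator in Python. It is not Anthropic's calibration: the two equally weighted task groups and the assumption that AI doubles productivity on one of them are hypothetical. Setting σ to infinity recovers the separable (linear) case; σ = 0.5 makes the groups complements.

```python
import math

def ces_output(inputs, weights, sigma):
    """CES aggregate: (sum_i w_i * x_i**rho) ** (1/rho), with rho = (sigma - 1) / sigma.

    sigma = math.inf gives the separable (linear) case; sigma < 1 makes tasks
    complements. sigma = 1 (Cobb-Douglas) is a limit not handled here.
    Weights are assumed to sum to 1 and inputs to be positive.
    """
    if sigma == 1:
        raise ValueError("sigma = 1 is the Cobb-Douglas limit; handle it separately")
    rho = 1.0 if math.isinf(sigma) else (sigma - 1.0) / sigma
    return sum(w * x ** rho for w, x in zip(weights, inputs)) ** (1.0 / rho)

# Hypothetical: two equally weighted task groups; AI doubles productivity on the first.
weights = [0.5, 0.5]
baseline = [1.0, 1.0]
boosted = [2.0, 1.0]

for sigma in (math.inf, 0.5):
    gain = ces_output(boosted, weights, sigma) / ces_output(baseline, weights, sigma) - 1
    print(f"sigma={sigma}: output gain {gain:.0%}")
```

With these inputs, the separable case turns a doubling on half the work into a 50% output gain, while σ = 0.5 yields only about 33%. Under σ = 0.5, even an unbounded boost to the first group can at most double output, because the un-boosted group becomes the binding constraint. That is the O-ring logic expressed in CES form.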

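Moving from separable to complementary tasks alone cuts projected productivity gains by 50–61% (from 1.8 to 0.7–0.9 points); adding the success-rate adjustment brings the cut to about 67%. Because σ = 0.5 is an assumed parameter rather than an estimate, this is best read as a demonstration of how much the complementarity assumption matters, but it is the first time the O-ring framework's predictions have been applied to usage data at this scale.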

Microsoft's AI Diffusion Index (January 2026) asked the adoption question: Who is using AI? Using aggregated telemetry adjusted for device market share and internet penetration, they found global adoption reached 16.3% by the end of 2025. But the geographic distribution is unexpected: the UAE leads at 64% adoption, Singapore at 60.9%, while the United States—despite leading in AI infrastructure and frontier model development—ranks 24th at 28.3%.

Microsoft's data reveals that capability development and adoption leadership are different things. Countries that invested early in policy coordination, trust-building, and institutional preparation (UAE appointed the world's first AI minister in 2017, five years before ChatGPT) are realizing benefits that capability leadership alone cannot deliver.

The Deskilling Puzzle

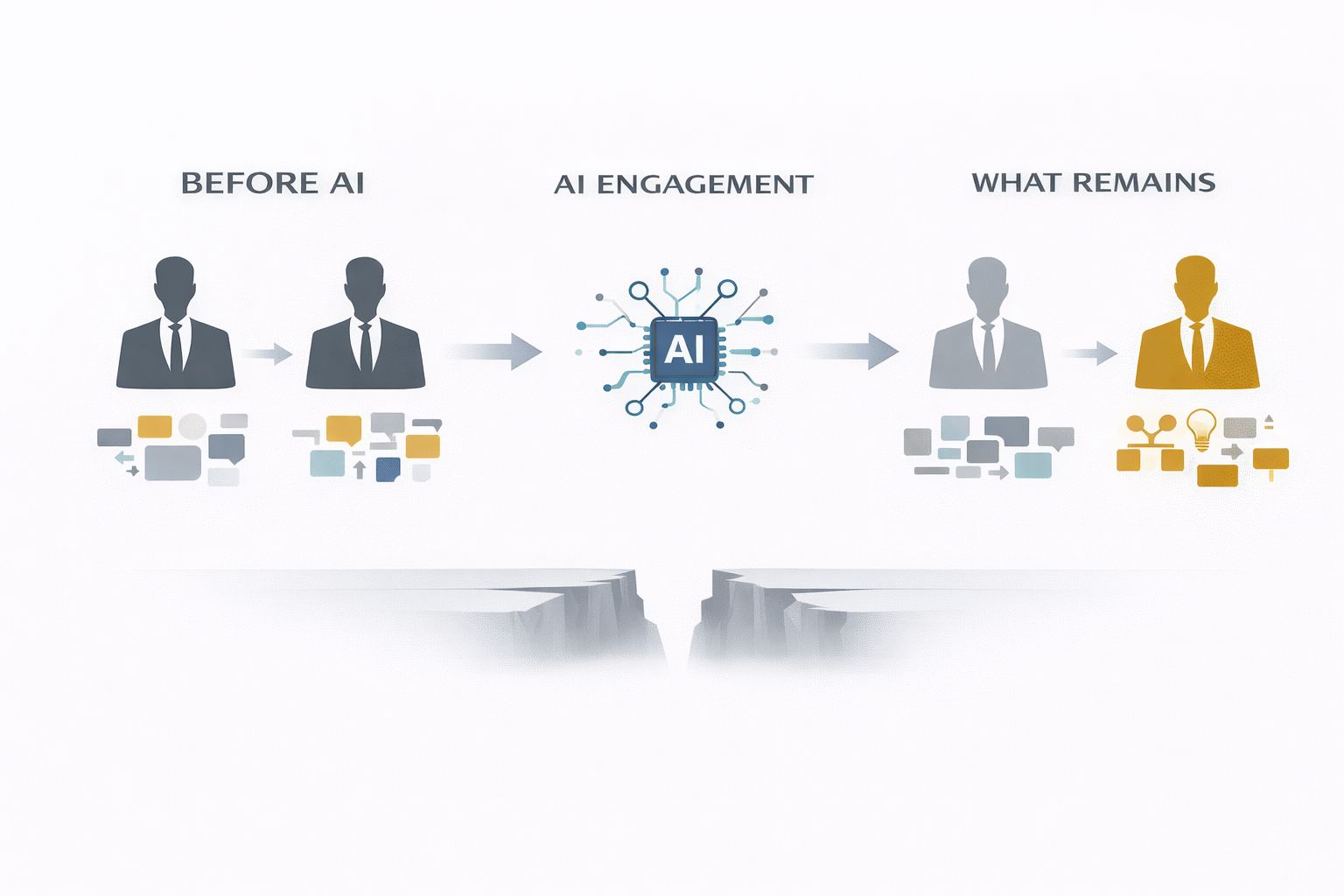

Anthropic's most provocative finding was what they called the "deskilling effect." When you remove AI-covered tasks from occupational profiles, the remaining work requires lower educational attainment on average. AI handles the sophisticated parts; humans keep the routine residual.

Their examples are vivid: Technical writers lose "analyze developments to determine revision needs" (18.7 years average education) while keeping "draw sketches to illustrate materials" (13.6 years). Travel agents lose "plan and sell itinerary packages" (13.5 years) while keeping "print tickets" (12.0 years).
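
One simple way to compute a shift like this is to compare the average education level of a role's tasks before and after removing the AI-covered ones. The sketch below uses the two technical-writer tasks quoted above; restricting the profile to two tasks and weighting them equally are simplifying assumptions, not Anthropic's method.

```python
def mean_education(tasks):
    """Unweighted mean of the years of education attached to each task."""
    return sum(years for _, years in tasks) / len(tasks)

# Technical-writer example from the text (two-task profile is a simplification).
profile = [
    ("analyze developments to determine revision needs", 18.7),
    ("draw sketches to illustrate materials", 13.6),
]
ai_covered = {"analyze developments to determine revision needs"}

# Keep only the tasks AI does not cover.
remaining = [task for task in profile if task[0] not in ai_covered]
print(f"before: {mean_education(profile):.2f} years, after: {mean_education(remaining):.2f} years")
```

The average drops because the task AI covers is the one with the higher education requirement, which is exactly the pattern Anthropic labels deskilling.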

This sounds alarming. But the O-ring framework suggests a more nuanced interpretation. The deskilling effect is real when AI removes high-value tasks and leaves commodity residual. But it reverses—becomes superskilling—when AI removes commodity tasks and leaves genuine judgment bottlenecks.

Consider a property manager whose role includes rent analysis, bookkeeping, maintenance coordination, and CRM upkeep alongside lease negotiations, owner relationships, and strategic asset decisions. AI excels at the first cluster, which is pattern work: sophisticated in its details but systematizable. What remains is bottleneck work: negotiating with difficult tenants, managing owner expectations, making strategic decisions that determine whether properties thrive or churn.

For this property manager, AI doesn't deskill—it liberates. They can focus entirely on the judgment work that was always their real contribution, performing it at higher quality and higher volume. The same phenomenon that deskills a technical writer superskills a property manager.

The difference isn't the technology. It's the composition of the role: specifically, whether the tasks AI takes over are the role's bottlenecks (deskilling) or the commodity work around them (superskilling).
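
The rule in that paragraph can be written as a toy classifier. The task lists follow the property manager and technical writer examples; the `ai_handles` and `is_bottleneck` flags are hypothetical judgments supplied by hand, and a role where AI takes over a mix of both kinds of task is lumped into "deskilling" by this deliberately crude rule.

```python
from dataclasses import dataclass

@dataclass
class Task:
    name: str
    ai_handles: bool     # does AI take this task over? (hypothetical judgment)
    is_bottleneck: bool  # does this task bind overall output? (hypothetical judgment)

def classify_role(tasks):
    """Toy rule: deskilling if AI takes over any bottleneck task,
    superskilling if AI takes over only non-bottleneck (commodity) tasks."""
    automated = [t for t in tasks if t.ai_handles]
    if not automated:
        return "unchanged"
    if any(t.is_bottleneck for t in automated):
        return "deskilling"
    return "superskilling"

property_manager = [
    Task("rent analysis", ai_handles=True, is_bottleneck=False),
    Task("bookkeeping", ai_handles=True, is_bottleneck=False),
    Task("maintenance coordination", ai_handles=True, is_bottleneck=False),
    Task("lease negotiations", ai_handles=False, is_bottleneck=True),
    Task("owner relationships", ai_handles=False, is_bottleneck=True),
    Task("strategic asset decisions", ai_handles=False, is_bottleneck=True),
]
technical_writer = [
    Task("analyze developments to determine revision needs", ai_handles=True, is_bottleneck=True),
    Task("draw sketches to illustrate materials", ai_handles=False, is_bottleneck=False),
]

print(classify_role(property_manager), classify_role(technical_writer))
```

Notice that the classifier only works because someone has already decided which tasks are bottlenecks. Supplying those flags is the hard part.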

The Diagnostic Gap

Here is the problem with the current research landscape: we now have excellent data on AI capability (GDPval), usage patterns (Anthropic), adoption breadth (Microsoft), and theoretical frameworks (Gans-Goldfarb). But none of these answers the question that organizations and individuals actually need answered:

"Given my specific role composition, will AI engagement leave me with valuable judgment bottlenecks or commodity residual?"

GDPval tells you AI can write a legal brief at expert quality. It cannot tell you what percentage of a specific lawyer's role involves brief-writing versus client relationships, court appearances, and judgment calls.

Anthropic tells you AI usage concentrates in certain task categories, with success rates that decline as complexity rises. It cannot tell you whether the tasks AI handles in your role are bottlenecks or commodity work.

Microsoft tells you 28.3% of Americans use AI tools. It cannot tell you whether your specific industry, function, or role will experience deskilling or superskilling.

The O-ring theory tells you bottlenecks matter more than average exposure. It cannot tell you which tasks in a specific role are the bottlenecks.

This is the diagnostic gap: the space between macro research findings and micro transformation decisions. The research establishes that composition determines outcomes—but provides no methodology for assessing composition at the level where decisions are actually made.