In 1911, Frederick Winslow Taylor published The Principles of Scientific Management, arguing that work could be decomposed into measurable components, optimized through systematic study, and reassembled into more efficient processes. The book was controversial (critics accused Taylor of reducing workers to mere cogs in a machine), but its influence was undeniable. Within decades, Taylor's methods had transformed manufacturing and eventually extended into the knowledge work that would come to define the twentieth-century corporation.

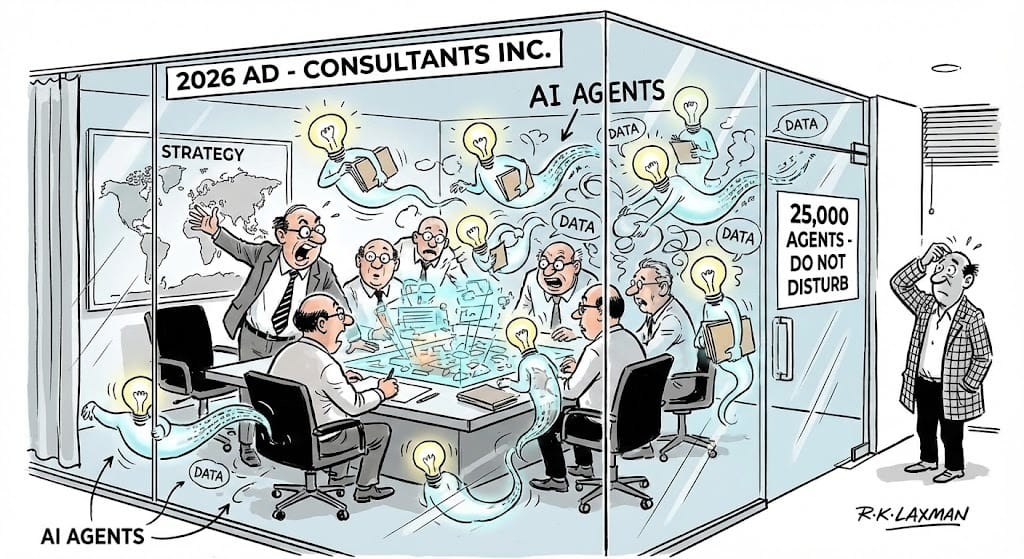

I've been thinking about Taylor lately, not because his methods are newly relevant, but because we're witnessing their logical conclusion play out at the world's most prestigious consulting firm. McKinsey & Company, the institution that arguably did more than any other to industrialize management thinking itself, has just revealed that it now employs 25,000 AI agents alongside 40,000 humans. The agent count, which was closer to 3,000 just eighteen months ago, is expected to approach parity with the human headcount by year's end.

The headlines have focused on the automation angle: consultants replaced by machines, white-collar work under siege, the robots finally coming for the elite. But I think this framing misses what's actually interesting about McKinsey's disclosure. What we're seeing isn't the automation of consulting—it's the revelation of what consulting work actually consisted of all along.

Bob Sternfels, McKinsey's global managing partner, has been remarkably candid about the transformation underway. Speaking on the Harvard Business Review IdeaCast, he described a workforce of 60,000: 40,000 humans and 20,000 AI agents. Days later at CES, he updated that figure to 25,000 agents, a number McKinsey subsequently confirmed to Business Insider.

But the more revealing statistic came from the firm's internal deployment data. Since Lilli, McKinsey's generative AI platform, rolled out firm-wide in July 2023, 72% of employees have become active users, logging over 500,000 prompts monthly. The reported efficiency gain: up to 30% time savings in "searching and synthesizing knowledge."

That phrase deserves examination. Searching through documents is, by definition, pattern work: applying known criteria to find relevant information. Synthesizing information is largely pattern work too: combining inputs according to established frameworks to produce structured outputs. The work that junior consultants traditionally spent "lots of time on," as one McKinsey leader put it (creating slide decks, compiling charts, applying best practices), follows similarly predictable patterns.

The firm reportedly saved 1.5 million hours in 2025 through AI. That's 1.5 million hours of what was presumably considered professional expertise, now revealed to be pattern-matching that machines can do faster.

This is not a criticism of McKinsey's consultants. It's a structural observation about what systematized knowledge work actually consists of—and it carries implications far beyond consulting.